Improving a Gridsome website's performance

How we quickly bumped Codegram's site performance with just a few easy changes

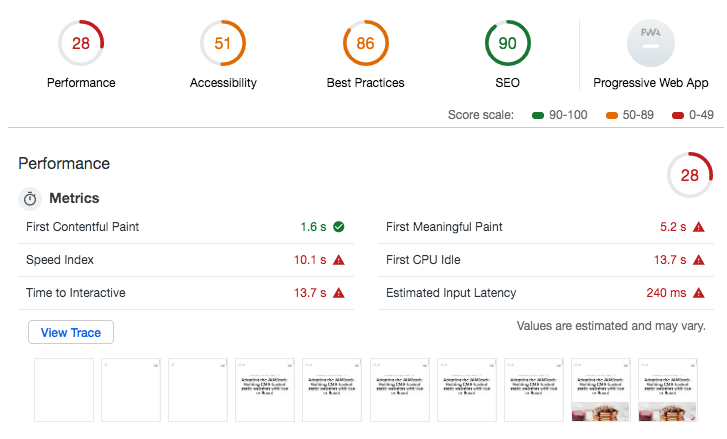

We were pretty proud of the brand-new Codegram website you are visiting right now. It looks nice and has cool animations… Until someone thought of running an audit against the site!

oh no

Panic! These numbers were completely unacceptable (especially considering we are a technology agency 🙈), so we needed to find a solution, fast. We focused on the worst numbers, accessibility and performance, and split the tasks: I started working on improving performance.

Lighthouse, the tool that powers the Audits panel of Chrome DevTools, is excellent: some of its suggestions can be applied directly, such as adding `async` or `defer` to non-critical scripts, while others require a bit (or a lot) more work. To get the most improvement in the least amount of time, I decided to focus on the lowest-hanging fruit.

Properly size images: Gridsome to the rescue

Gridsome offers a `g-image` component that outputs an optimized progressive image. By default, it loads a very light, blurred base64 placeholder and, using an Intersection Observer, swaps it for the larger, higher-quality image as soon as it comes into the viewport.

You can specify `width`, `height` and `quality` attributes to get the most optimization possible, and crop the image easily:

```html
<g-image src="~/assets/image.png" width="500" height="120" quality="75" />
```

This, however, only works with local, relative image paths. So what about dynamic images, specifically, the ones that come from blog posts?

The blog is built with Gridsome too and uses GraphQL, so when you write the query, you can also pass the `width`, `height` and `quality` parameters:

```graphql
query BlogPost {
  blogPosts: allBlogPost {
    edges {
      node {
        # ...
        image(width: 376, height: 250, quality: 75)
      }
    }
  }
}
```

The only drawback is that, currently, lazy loading doesn't work with these images. It shouldn't be too difficult to implement manually, but since the website doesn't rely heavily on images, we'll leave it as a future improvement.
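If we do tackle it, manual lazy loading can reuse the same idea as `g-image`. Below is a minimal sketch built on `IntersectionObserver`, not Gridsome's own implementation. It assumes each blog `<img>` is rendered with the blurred placeholder in `src` and the full-size URL in a hypothetical `data-src` attribute; the `lazyLoadImages` name and the `200px` margin are our own choices. The observer constructor is a parameter only so the logic can be exercised outside a browser.

```javascript
// Minimal lazy-loading sketch (not Gridsome's implementation).
// Assumes each <img> starts with a blurred placeholder in `src` and keeps
// the full-size URL in a hypothetical `data-src` attribute.
function lazyLoadImages (images, ObserverCtor = globalThis.IntersectionObserver) {
  // Without IntersectionObserver support, load everything right away.
  if (typeof ObserverCtor !== 'function') {
    images.forEach(img => { img.src = img.dataset.src })
    return null
  }

  const observer = new ObserverCtor((entries, obs) => {
    entries.forEach(entry => {
      if (!entry.isIntersecting) return
      const img = entry.target
      img.src = img.dataset.src // swap the placeholder for the real image
      obs.unobserve(img) // each image only needs to be loaded once
    })
  }, { rootMargin: '200px' }) // start loading slightly before it is visible

  images.forEach(img => observer.observe(img))
  return observer
}

// Usage in the browser, e.g. from a component's mounted() hook:
// lazyLoadImages(Array.from(document.querySelectorAll('img[data-src]')))
```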

Tip: One blog image was not getting resized properly. It turned out its file extension was uppercase; changing it to lowercase fixed the issue!
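To catch this before it bites again, a small check could flag image files whose extension isn't lowercase. This is a hypothetical helper (the function names and the extension list are our own), written as pure functions so it can run over any list of file names, for example the output of Node's `fs.readdirSync`.

```javascript
// Hypothetical helper: find image files whose extension isn't lowercase
// (e.g. "cover.PNG") and propose the lowercase file name.
const IMAGE_EXTENSIONS = ['.png', '.jpg', '.jpeg', '.gif', '.webp', '.svg']

function toLowercaseExtension (fileName) {
  const dot = fileName.lastIndexOf('.')
  if (dot <= 0) return fileName // no extension, or a dotfile: leave as-is
  return fileName.slice(0, dot) + fileName.slice(dot).toLowerCase()
}

function findUppercaseImageExtensions (fileNames) {
  return fileNames
    .map(name => ({ from: name, to: toLowercaseExtension(name) }))
    .filter(({ from, to }) =>
      from !== to && IMAGE_EXTENSIONS.includes(to.slice(to.lastIndexOf('.'))))
}

findUppercaseImageExtensions(['cover.PNG', 'team.jpg', 'Logo.Svg', 'NOTES.TXT'])
// → [ { from: 'cover.PNG', to: 'cover.png' }, { from: 'Logo.Svg', to: 'Logo.svg' } ]
```

Each `{ from, to }` pair could then be passed to `fs.renameSync`, remembering to update any Markdown that references the old file name.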

Avoid enormous network payloads and minimize main-thread work

The website is a static site built with Gridsome, so many best practices, such as code-splitting and prefetching, are already applied out of the box. That left me unsure how to reduce the bundle size any further.

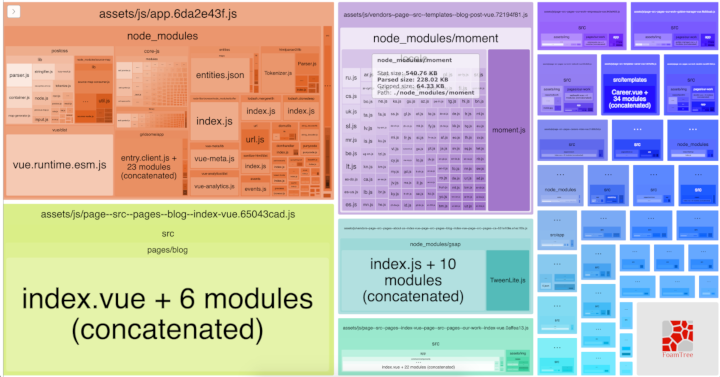

Luckily, there are tools to analyze your bundle! Webpack Bundle Analyzer lets you visualize the size of your output files with an interactive, zoomable treemap. Since we're using Gridsome, we can't edit the webpack config directly to add the plugin, but doing it through `chainWebpack` is just as easy:

```javascript
// gridsome.config.js
const BundleAnalyzerPlugin = require('webpack-bundle-analyzer')
  .BundleAnalyzerPlugin

module.exports = {
  // ...
  chainWebpack: config => {
    config
      .plugin('BundleAnalyzerPlugin')
      .use(BundleAnalyzerPlugin, [{ analyzerMode: 'static' }])
  }
}
```

Tip: Notice two things. When using `.use(BundleAnalyzerPlugin)`, there is no need to call `new` to create the plugin, as this is done for you (see docs). I also added `{ analyzerMode: 'static' }` to avoid an `Error: listen EADDRINUSE: address already in use 127.0.0.1:8888`, caused by the analyzer trying to run its server twice.

As you can see, one of the biggest boxes is Moment.js. It's a great library, but we were only using it to format the post date. A bit overkill, indeed. There are many alternatives to Moment.js; I chose the tiniest one, Day.js, which is more than enough to format the date nicely, and the bundle size dropped significantly.
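For reference, formatting a date with Day.js looks like `dayjs(date).format('MMMM D, YYYY')`, mirroring Moment's `format()` API. If a project only ever needs one date format, the built-in `Intl.DateTimeFormat` API is another, zero-dependency alternative. The sketch below uses it (it is not what the site ships) and assumes a "Month D, YYYY" format and date-only ISO strings in the post data.

```javascript
// Zero-dependency sketch of a post date formatter using the built-in Intl API.
// Roughly equivalent to dayjs(date).format('MMMM D, YYYY').
const postDateFormatter = new Intl.DateTimeFormat('en-US', {
  year: 'numeric',
  month: 'long',
  day: 'numeric',
  // Date-only ISO strings are parsed as UTC, so format in UTC as well to
  // avoid showing the previous day in time zones behind UTC.
  timeZone: 'UTC'
})

function formatPostDate (isoDate) {
  return postDateFormatter.format(new Date(isoDate))
}

formatPostDate('2019-10-01') // → 'October 1, 2019'
```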

Other than Moment, though, there were not many opportunities. PostCSS is a huge dependency too, but sadly it's required by sanitize-html, which is required by @gridsome/transformer-remark, a Markdown transformer for Gridsome that we are using for the blog.

That's what happens when you go down the node_modules rabbit hole

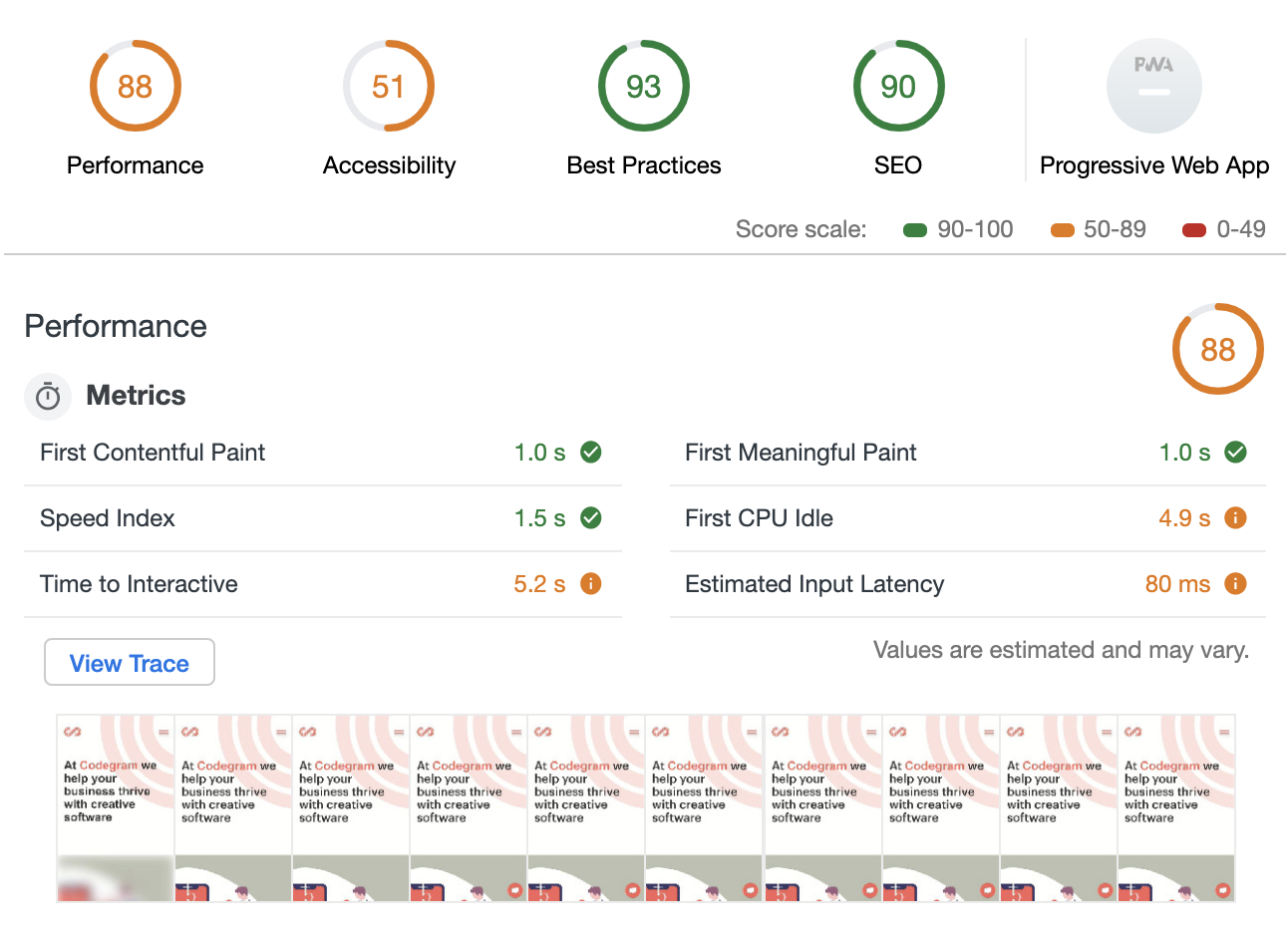
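`npm ls postcss` prints exactly this kind of chain. The same idea can be sketched in a few lines over the JSON tree that `npm ls --all --json` produces (nested `dependencies` objects keyed by package name). The `findDependencyPaths` helper and the trimmed sample tree are illustrative only, not real output from the project.

```javascript
// Illustrative helper: list every chain of packages that pulls in `target`,
// given a tree shaped like the output of `npm ls --all --json`
// ({ dependencies: { name: { version, dependencies: { ... } } } }).
function findDependencyPaths (node, target, path = []) {
  const paths = []
  for (const [name, child] of Object.entries(node.dependencies || {})) {
    const current = [...path, name]
    if (name === target) paths.push(current)
    paths.push(...findDependencyPaths(child, target, current))
  }
  return paths
}

// Hypothetical, heavily trimmed tree for illustration only.
const tree = {
  dependencies: {
    '@gridsome/transformer-remark': {
      dependencies: {
        'sanitize-html': { dependencies: { postcss: {} } }
      }
    },
    dayjs: {}
  }
}

findDependencyPaths(tree, 'postcss')
// → [ [ '@gridsome/transformer-remark', 'sanitize-html', 'postcss' ] ]
```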

Even so, with just those two changes, we managed to improve the performance score significantly, up to ~88!

More opportunities

Of course, the work doesn't end here. There is still room for improvement:

Minimize main-thread work: Although, as we've just seen, the biggest dependencies are not easily removable, we could still check for smaller ones. It's not a quick task, but that's where we could gain the most: ~2.9 s.

Remove unused CSS: There are tools to detect dead rules, but I find them not accurate at all, and they still require a lot of manual checking. That's a lot of work for a gain of just ~0.15 s, so it will be our last priority.

Minimize Critical Requests Depth: We could remove the fonts from the critical path. We are already using `font-display: swap;` to prevent FOIT (flash of invisible text): the most important part of the site is the content, so we'd rather you start reading right away in a fallback font than wait a few seconds with no text at all until the proper font loads. However, we could defer downloading the fonts to improve performance further. Zach Leatherman's A Comprehensive Guide to Font Loading Strategies can help choose the best way to load them.

Further improve images: We could lazy-load the blog images and optimize, or change the format of, some heavy PNG images. However, I'm puzzled by the audit tool recommending formats like JPEG 2000 or JPEG XR, which Chrome doesn't support.
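For the font item above, one of the strategies in that guide, "FOUT with a class", loads fonts through the CSS Font Loading API and only switches to them once they have arrived. Below is a minimal sketch, assuming the CSS applies the web font only under a `fonts-loaded` class on `<html>`; the `loadWebFonts` name, font family and URL are placeholders, and the browser globals are injectable parameters so the logic can run outside a browser.

```javascript
// Sketch of the "FOUT with a class" strategy using the CSS Font Loading API.
// Family names and URLs are placeholders; CSS is assumed to apply the web
// font only under `html.fonts-loaded`, so text renders with a fallback first.
function loadWebFonts (fonts, {
  FontFaceCtor = globalThis.FontFace,
  fontSet = globalThis.document && globalThis.document.fonts,
  root = globalThis.document && globalThis.document.documentElement
} = {}) {
  const faces = fonts.map(({ family, url, descriptors = {} }) =>
    new FontFaceCtor(family, `url(${url})`, descriptors))

  // Download all faces in parallel, then register them and add the class
  // once, so text switches away from the fallback font a single time.
  return Promise.all(faces.map(face => face.load())).then(loaded => {
    loaded.forEach(face => fontSet.add(face))
    root.classList.add('fonts-loaded')
  })
}

// Usage in the browser, e.g. after the page has loaded:
// loadWebFonts([{ family: 'Brand Sans', url: '/fonts/brand-sans.woff2', descriptors: { weight: '400' } }])
```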

Lessons learned

We've learned a lot about best practices, but the main lesson is that we need to incorporate audits into our workflow. Performance and accessibility shouldn't be an afterthought; they should be among the main concerns when building a website.

Stay tuned to learn how we improved the accessibility stat too!