After stabilizing the platform through refactoring and low-hanging fruit, we began reworking the most critical parts of the system, setting up business and system metrics along the way. By the end, we had migrated everything to a multi-region AWS deployment. We also split the playable world into regions, with players load-balanced across them after login, to offer a customized experience to users in different parts of the globe.

One of the most important structural changes was gradually porting the Rails monolith to a JSON API with an Ember app on the frontend. We did this page by page, so as to avoid a dreaded big-bang rewrite and keep delivering value: we shipped dozens of features while making these structural changes across the whole system.

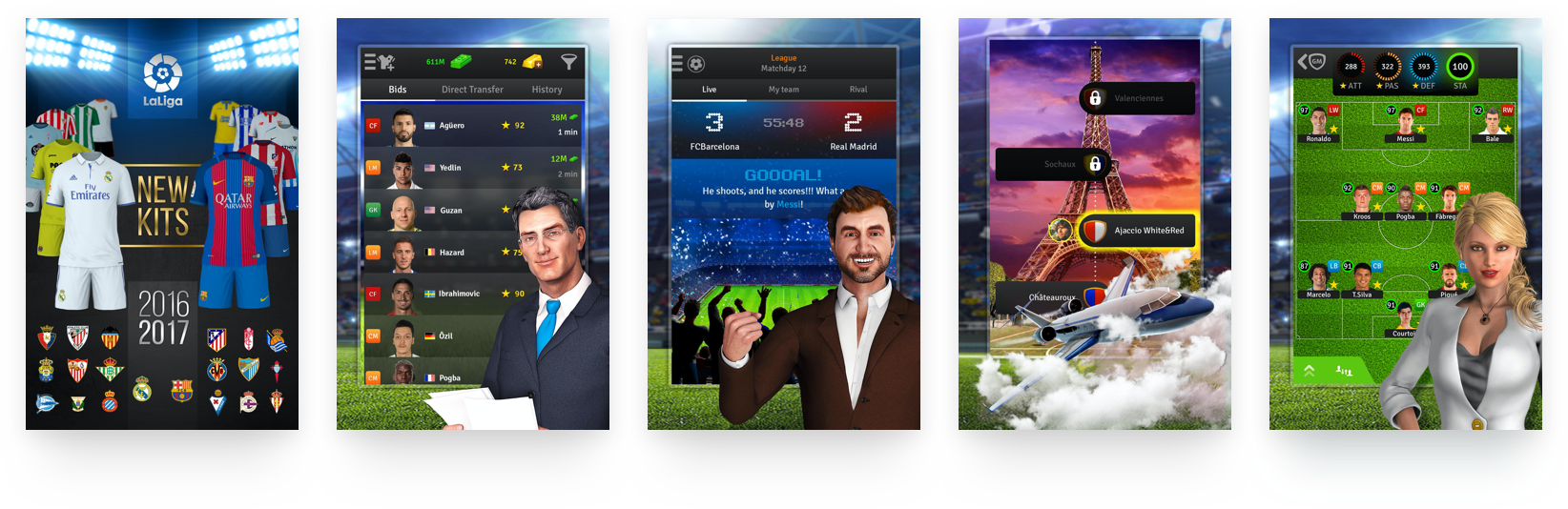

Incidentally, decoupling the application behind an API later proved essential for building native mobile applications, when we worked hand in hand with the iOS and Android developers to tailor and optimize certain endpoints for mobile.

Finally, we rewrote the virtual auction system from scratch as a real-time application built with WebSockets, Ember and Ruby. Revenue soon started flowing in, driven by highly engaged paying customers with strong retention who competed with each other to acquire the best football players.

To tie everything together, we tracked every action in the system and built an ETL pipeline to ship that data to AWS Redshift, where their Business Intelligence team could analyze it to drive product decisions going forward. We also set up data pipelines that gave the sales team an end-to-end view of the conversion funnel across the gaming experience.